- The Next Input by Cylentis AI


🎮 The Next Input — Issue #131

The xAI Exodus: Founders Quit Amid Merger

⚡ The Briefing — 60 sec

xAI lays out interplanetary ambitions in public all-hands: Interplanetary ambition on stage while co-founders are exiting left and right. It’s starting to feel a little… Upside Down.

AI poised to disrupt business software, alter enterprise stack: Proof that you can’t take every AI claim at face value. If any enterprise is seriously running OpenClaw, all I can say is: good luck.

AI chatbots expand into mental health support in Nigeria: You have to wonder whether future health-focused AI releases will address this properly. Mental health demands special care, and chatbots need serious stress testing before they step into that arena.

🛠️ The Playbook — The AI Risk Triage Engine

Mission: Evaluate AI initiatives before they create reputational, operational, or ethical blowback.

Difficulty: Advanced

Build time: 2–3 hours

ROI: Reduces strategic missteps and prevents public credibility damage.

0) Why This Matters

Ambition is loud. Disruption headlines are louder.

But AI systems don’t fail quietly — they fail publicly.

Whether it’s enterprise software swaps or mental health chatbots, the cost of moving fast without structured evaluation is measured in trust.

This engine forces discipline before deployment.

1) Architecture

| Component | Tool | Purpose | Owner | Failure mode |
|---|---|---|---|---|
| Strategy draft | Claude 4.5 Sonnet | Outline AI initiative and intended outcomes | Product | Overstated capability |
| Risk enumerator | GPT-5-mini | Identify technical, legal, and ethical risks | Analyst | Surface-level analysis |
| Scenario stressor | Claude 4.5 Haiku | Model edge cases and worst-case outcomes | Reviewer | Missed downstream consequences |
| Evidence binder | Perplexity Pro | Ground assumptions in real-world precedent | Ops | Unverified assumptions |
| Approval gate | Human committee | Final risk sign-off | Exec | Rubber-stamp approval |

2) Workflow

1. Define the initiative: Draft the exact AI use case, target users, and deployment environment.
2. Enumerate risks: GPT-5-mini lists operational, regulatory, reputational, and ethical risks.
3. Stress test: Claude 4.5 Haiku models failure scenarios and unintended consequences.
4. Precedent check: Perplexity Pro gathers real-world case studies or regulatory responses.
5. Mitigation plan: Convert each risk into an explicit control or guardrail.
6. Executive sign-off: No launch without documented risk acknowledgment.
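If you track reviews in code rather than a doc, the six steps above can be sketched as an ordered checklist. This is a minimal illustration, not part of any tool listed here: the `Review` record, its field names, and `next_step` are all hypothetical, and the model calls themselves are left out.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Review:
    """Hypothetical record of one initiative moving through the six steps."""
    initiative: str = ""
    risks: List[str] = field(default_factory=list)
    stress_scenarios: List[str] = field(default_factory=list)
    precedents: List[str] = field(default_factory=list)
    mitigations: Dict[str, str] = field(default_factory=dict)  # risk -> control
    signed_off_by: Optional[str] = None


def next_step(review: Review) -> str:
    """Return the first workflow step that is still incomplete."""
    if not review.initiative.strip():
        return "Define the initiative"
    if not review.risks:
        return "Enumerate risks"
    if not review.stress_scenarios:
        return "Stress test"
    if not review.precedents:
        return "Precedent check"
    # Every enumerated risk needs its own control before sign-off.
    if any(risk not in review.mitigations for risk in review.risks):
        return "Mitigation plan"
    if review.signed_off_by is None:
        return "Executive sign-off"
    return "Ready to launch"
```

The point of the ordering is that sign-off is unreachable until every earlier step has left evidence behind.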

3) Example Prompts

Risk Enumeration (GPT-5-mini)

List all potential risks associated with this AI deployment.

Include:

- operational risks

- regulatory exposure

- reputational impact

- ethical concerns

Return a categorized list.

Stress Test (Claude 4.5 Haiku)

Assume this AI system fails publicly.

Describe:

- the failure mode

- who is impacted

- likely media narrative

- regulatory response

Mitigation Builder

For each identified risk:

- propose a specific control

- assign an owner

- define a measurable safeguard

Return as a table.
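To keep the Mitigation Builder output honest, it can help to check the returned table for gaps before it reaches the committee. The sketch below assumes you have already parsed the model's answer into rows; the `Mitigation` class, `incomplete`, and `to_markdown` are hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Mitigation:
    """One row of the mitigation table (hypothetical structure)."""
    risk: str
    control: str
    owner: str
    safeguard: str  # the measurable safeguard, e.g. a threshold or review SLA


def incomplete(rows: List[Mitigation]) -> List[str]:
    """Return the risks whose row is missing a control, owner, or safeguard."""
    return [
        m.risk
        for m in rows
        if not (m.control.strip() and m.owner.strip() and m.safeguard.strip())
    ]


def to_markdown(rows: List[Mitigation]) -> str:
    """Render the rows as a markdown table for the risk log."""
    lines = [
        "| Risk | Control | Owner | Measurable safeguard |",
        "|---|---|---|---|",
    ]
    for m in rows:
        lines.append(f"| {m.risk} | {m.control} | {m.owner} | {m.safeguard} |")
    return "\n".join(lines)
```

Anything `incomplete` returns goes back to the prompt or to a human before the table is filed.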

4) Guardrails

No deployment without documented risk assessment.

High-stakes domains (health, finance, education) require expanded review.

If public trust is at risk, human oversight is mandatory.

Marketing claims must match tested capability.
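The four guardrails above are simple enough to encode as a pre-launch check. This is one possible sketch under stated assumptions: the `Initiative` fields and the wording of each violation message are hypothetical, and the high-stakes list only includes the three domains named above.

```python
from dataclasses import dataclass, field
from typing import List, Set

# Domains the guardrails name as high-stakes; extend as needed.
HIGH_STAKES_DOMAINS = {"health", "finance", "education"}


@dataclass
class Initiative:
    """Hypothetical pre-launch facts about one AI initiative."""
    domain: str
    has_risk_assessment: bool = False
    expanded_review_done: bool = False
    public_trust_at_risk: bool = False
    human_oversight: bool = False
    marketing_claims: Set[str] = field(default_factory=set)
    tested_capabilities: Set[str] = field(default_factory=set)


def guardrail_violations(item: Initiative) -> List[str]:
    """Return one message per broken guardrail; an empty list means clear."""
    violations = []
    if not item.has_risk_assessment:
        violations.append("No documented risk assessment")
    if item.domain.lower() in HIGH_STAKES_DOMAINS and not item.expanded_review_done:
        violations.append("High-stakes domain without expanded review")
    if item.public_trust_at_risk and not item.human_oversight:
        violations.append("Public trust at risk without human oversight")
    # Any claim marketing makes that testing has not covered is a violation.
    untested = item.marketing_claims - item.tested_capabilities
    if untested:
        violations.append("Untested marketing claims: " + ", ".join(sorted(untested)))
    return violations
```

An empty list is a precondition for the approval gate, not a substitute for it.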

5) Pilot Rollout — 3 hours

1. Select one current or planned AI initiative.
2. Run full risk enumeration + stress test.
3. Document mitigation strategies.
4. Present findings to decision-makers.
5. Adjust deployment plan accordingly.
6. Make this review step mandatory pre-launch.

6) Metrics

Documented risks per initiative

Mitigation coverage ratio

Post-launch incident count (target = zero)

Regulatory inquiries

Public trust indicators (qualitative feedback)
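The issue doesn't define the mitigation coverage ratio, so here is one plausible reading: the share of documented risks that have at least one control assigned. The function name and the choice to return 0.0 when no risks are documented are assumptions in this sketch.

```python
from typing import Dict, List


def mitigation_coverage(risks: List[str], mitigations: Dict[str, str]) -> float:
    """Share of documented risks that have a non-empty control assigned.

    Assumption: zero documented risks returns 0.0 rather than 1.0, since
    an empty risk list usually means the review never happened.
    """
    if not risks:
        return 0.0
    covered = sum(1 for risk in risks if mitigations.get(risk, "").strip())
    return covered / len(risks)
```

With four documented risks and controls for three of them, this returns 0.75. Tracked per initiative, the ratio shows where the mitigation plan still has gaps.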

Pro Tip: If you can’t articulate the downside clearly, you don’t understand the upside.

🎯 The Arsenal — Tools & Platforms

Claude 4.5 Sonnet · Structured strategy drafting · https://anthropic.com

GPT-5-mini · Rapid structured risk enumeration · https://openai.com

Perplexity Pro · Grounding assumptions in real-world cases · https://perplexity.ai

Notion · Risk log and mitigation tracking · https://notion.so

Copy-paste prompt block:

Before launching this AI initiative:

List every material risk.

Stress test failure scenarios.

If public trust is exposed, say so.

No optimism bias.

💡 Free Office Hours

Want help implementing this? Book a free 15-minute Office Hours slot — no sales pitch, just workflows solved.

Better prompts. Better AI output.

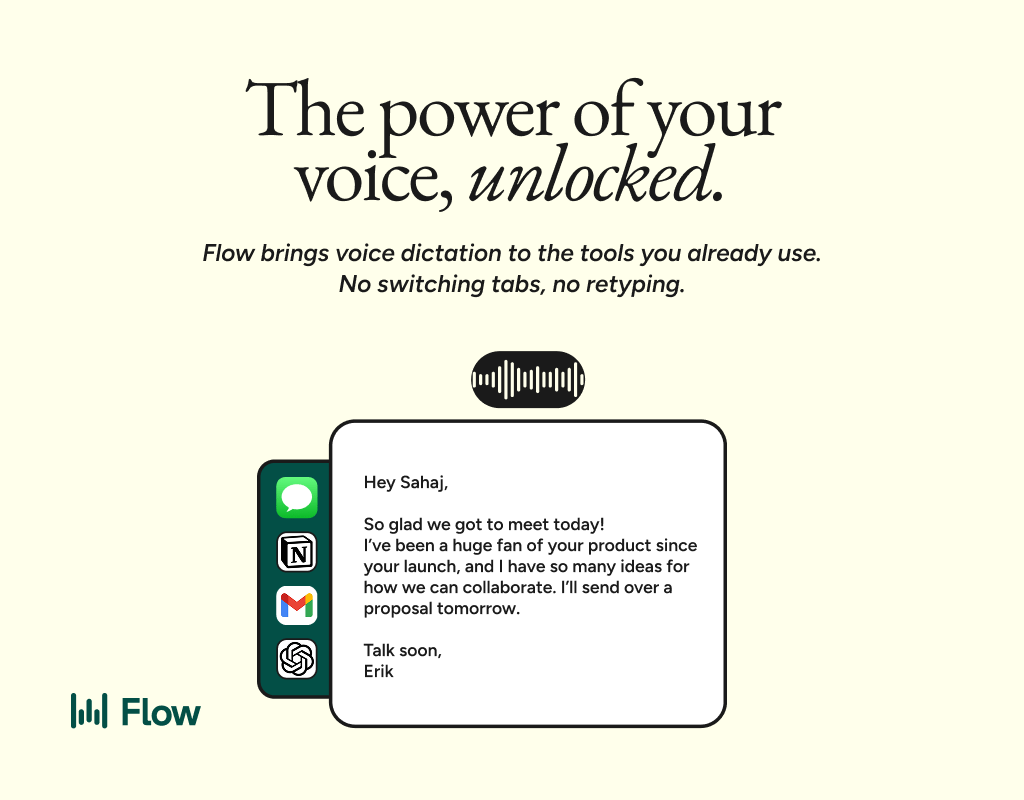

AI gets smarter when your input is complete. Wispr Flow helps you think out loud and capture full context by voice, then turns that speech into a clean, structured prompt you can paste into ChatGPT, Claude, or any assistant. No more chopping up thoughts into typed paragraphs. Preserve constraints, examples, edge cases, and tone by speaking them once. The result is faster iteration, more precise outputs, and less time re-prompting. Try Wispr Flow for AI or see a 30-second demo.

🕹️ Game Over

Ambition scales. Risk compounds.

— Aaron Automating the boring. Amplifying the brilliant.

Subscribe: https://cylentisai.beehiiv.com/subscribe