- The Next Input by Cylentis AI

🎮 The Next Input — Issue #173

Why the Oscars Just Banned AI

⚡ The Briefing — 60 sec

AI-generated actors and scripts are now ineligible for Oscars

Art and AI? Judges say they don’t mix. The Academy is drawing a hard line: humans can use tools, but the prize still belongs to human performance and human authorship.

Australia ‘sleepwalking’ into AI job losses as expert warns of looming threat

New week. New existential crisis vis-à-vis your job and AI. The warning is not that everything disappears overnight; it is that white-collar work keeps getting quietly eaten one workflow at a time.

Spotify adds ‘Verified’ badge to distinguish human acts from AI

The provenance wars are moving into music now. If everything can be generated, platforms are going to start making “made by humans” part of the label, the pitch, and probably the status game too.

🛠️ The Playbook — The Human Provenance Engine

Mission

Build AI workflows that clearly separate human-made, AI-assisted, and AI-generated work so trust does not collapse when synthetic content floods the room.

Difficulty

Intermediate

Build time

3–5 hours

ROI

Cleaner trust signals, lower reputational risk, and a stronger way to use AI without blurring ownership, authorship, or accountability.

0) Why This Matters

The next AI fight is not just about capability.

It is about authorship.

The Oscars are saying AI-generated actors and scripts are not eligible for awards. Spotify is reportedly adding verified badges to distinguish human artists from AI acts. Meanwhile, workers are being told that AI is coming for the tasks, the workflows, and eventually the roles.

Same pattern everywhere:

- who made this?

- who owns this?

- who gets credit?

- who gets replaced?

- who is accountable when the output lands?

This is not anti-AI. It is anti-confusion.

The winning organisations will not be the ones pretending AI was never used. They will be the ones that can clearly say where AI helped, where humans decided, and where the final responsibility sits.

1) Architecture

| Component | Tool | Purpose | Owner | Failure mode |
|---|---|---|---|---|
| Content intake | Docs / CMS / asset library | Captures drafts, media, scripts, reports, and outputs | Operations | No record of origin |
| AI usage log | Airtable / database / metadata | Tracks whether AI was used and how | Workflow owner | AI use becomes invisible |
| Provenance tag | CMS field / document label | Labels work as human-made, AI-assisted, or AI-generated | Content lead | Blurred authorship |
| Review layer | Human editor / approver | Validates quality, authorship, and risk | Team lead | Rubber-stamp approval |
| Evidence layer | Source links / notes / prompt history | Shows what informed the final work | Operator | Unsupported or unattributed claims |
| Publishing layer | Website / Spotify / awards / client portal | Displays the correct trust signal | Marketing / Ops | Public trust breaks |
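One way to picture the architecture is as a single record that follows each asset from intake to publishing. The sketch below is only an illustration: the `AssetRecord` class, its field names, and the sample values are assumptions made for this example, not a schema from any particular CMS or from Airtable.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Provenance(Enum):
    """The three provenance tags used throughout this playbook."""
    HUMAN_MADE = "human-made"
    AI_ASSISTED = "AI-assisted"
    AI_GENERATED = "AI-generated"


@dataclass
class AssetRecord:
    """Hypothetical row in a provenance register (all field names are assumptions)."""
    asset_id: str
    title: str
    provenance: Provenance                             # provenance tag
    ai_usage_note: str = ""                            # AI usage log: how AI was used
    evidence: List[str] = field(default_factory=list)  # source links, notes, prompt history
    reviewer: Optional[str] = None                     # review layer: who approved it
    approved: bool = False

    def public_label(self) -> str:
        """Trust signal the publishing layer would display."""
        return f"{self.title} [{self.provenance.value}]"


record = AssetRecord(
    asset_id="a-001",
    title="Q3 client report",
    provenance=Provenance.AI_ASSISTED,
    ai_usage_note="AI drafted the summary; an analyst rewrote the findings.",
    evidence=["notes/q3-sources.md"],
)
print(record.public_label())  # Q3 client report [AI-assisted]
```

Keeping the tag as an enum rather than free text means a typo cannot quietly create a fourth label.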

2) Workflow

Identify the types of work where authorship matters: reports, scripts, articles, music, images, client deliverables, or public-facing content.

Add a simple provenance field to each asset: human-made, AI-assisted, or AI-generated.

Require contributors to state how AI was used before the work is reviewed or published.

Route sensitive or public-facing assets through a human review step.

Attach sources, notes, or prompt history where evidence and accountability matter.

Publish with the right label so users, clients, or audiences know what they are looking at.
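The steps above can be sketched as a small routing function that tells a contributor what is still missing before an asset can go out. This is a minimal sketch under assumptions: the `Asset` fields, the step names, and the choice to skip the disclosure note for human-made work are all illustrative, not rules from a specific tool.

```python
from dataclasses import dataclass

LABELS = {"human-made", "AI-assisted", "AI-generated"}


@dataclass
class Asset:
    """Hypothetical asset; field names are assumptions for this sketch."""
    name: str
    label: str = ""
    ai_disclosure: str = ""            # contributor's statement of how AI was used
    sensitive_or_public: bool = False
    human_reviewed: bool = False


def next_step(asset: Asset) -> str:
    """Return the next workflow step the asset still has to clear."""
    if asset.label not in LABELS:
        return "needs-label"
    # Assumption: human-made work needs no AI usage statement.
    if asset.label != "human-made" and not asset.ai_disclosure.strip():
        return "needs-disclosure"
    if asset.sensitive_or_public and not asset.human_reviewed:
        return "needs-human-review"
    return "ready-to-publish"


draft = Asset(name="launch blog post", label="AI-assisted", sensitive_or_public=True)
print(next_step(draft))  # needs-disclosure

draft.ai_disclosure = "AI suggested the outline; the author wrote the text."
print(next_step(draft))  # needs-human-review

draft.human_reviewed = True
print(next_step(draft))  # ready-to-publish
```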

3) Example Prompts

Provenance Classifier

You are classifying the provenance of a piece of work.

Review the description below and classify it as:

- human-made

- AI-assisted

- AI-generated

Then explain:

1. what role the human played

2. what role the AI played

3. whether disclosure is recommended

4. what evidence should be retained

Description:

[insert description]

AI Usage Disclosure Prompt

You are preparing a clear AI usage disclosure.

Given the workflow below:

- explain how AI was used

- explain what humans reviewed or changed

- explain who is responsible for the final output

- keep the wording plain and non-defensive

Workflow:

[insert workflow]

Human Review Prompt

You are reviewing AI-assisted work before publication.

Check:

- whether authorship is clear

- whether claims are supported

- whether the human contribution is meaningful

- whether disclosure is required

- whether the output should be approved, revised, or rejected

Return a short decision.

Workforce Impact Prompt

You are reviewing a role for AI exposure.

For the role below:

- identify which tasks are most likely to be automated

- identify which tasks still require human judgment

- identify where AI assistance could improve work

- identify what skills the person should build next

Role:

[insert role]
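If these prompts are run from a script rather than pasted by hand, the Provenance Classifier prompt from this section could be filled in and its reply parsed roughly as follows. This is a sketch, not a finished integration: `build_prompt` and `parse_label` are hypothetical helpers, no model is called, and taking the first label mentioned in the reply is a simplifying assumption that a human reviewer should still check.

```python
from typing import Optional

LABELS = ("human-made", "AI-assisted", "AI-generated")

PROMPT_TEMPLATE = """You are classifying the provenance of a piece of work.
Review the description below and classify it as:
- human-made
- AI-assisted
- AI-generated
Then explain:
1. what role the human played
2. what role the AI played
3. whether disclosure is recommended
4. what evidence should be retained
Description:
{description}"""


def build_prompt(description: str) -> str:
    """Fill the classifier prompt with one asset description."""
    return PROMPT_TEMPLATE.format(description=description.strip())


def parse_label(reply: str) -> Optional[str]:
    """Return the label mentioned first in a model reply, or None if none appears."""
    lowered = reply.lower()
    hits = [(lowered.find(label.lower()), label)
            for label in LABELS if label.lower() in lowered]
    return min(hits)[1] if hits else None


prompt = build_prompt("An editor wrote the article and used AI to tidy the grammar.")
# `prompt` would be sent to whichever model the team uses; the reply below is made up.
sample_reply = "Classification: AI-assisted. It is not AI-generated."
print(parse_label(sample_reply))  # AI-assisted
```

Returning `None` when no label is found keeps unclear replies out of the register until a person decides.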

4) Guardrails

Do not hide AI involvement where authorship or trust matters.

Do not label AI-generated work as human-made.

Keep humans accountable for final public-facing outputs.

Separate AI assistance from AI authorship.

Retain source notes, prompt history, or evidence for high-trust work.

Treat provenance as operational hygiene, not moral panic.
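Some of these guardrails can be checked mechanically before anything is published. The sketch below covers three of them; the dictionary keys (`ai_tools`, `accountable_human`, `high_trust`, and so on) are assumptions chosen for the example, and a real register would use its own field names.

```python
from typing import Dict, List


def guardrail_violations(asset: Dict) -> List[str]:
    """List the guardrails an asset breaks; an empty list means none were found."""
    problems = []
    used_ai = bool(asset.get("ai_tools"))
    if used_ai and asset.get("label") == "human-made":
        problems.append("AI was used but the asset is labeled human-made")
    if asset.get("public_facing") and not asset.get("accountable_human"):
        problems.append("public-facing asset has no accountable human")
    if asset.get("high_trust") and not asset.get("evidence"):
        problems.append("high-trust asset has no retained evidence")
    return problems


press_release = {
    "label": "human-made",
    "ai_tools": ["chat assistant"],  # hypothetical usage log entry
    "public_facing": True,
    "accountable_human": "",
    "high_trust": True,
    "evidence": [],
}
for problem in guardrail_violations(press_release):
    print(problem)
```

Run against the sample above, the function reports all three problems; judging whether a human contribution is meaningful still needs a person.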

5) Pilot Rollout — 3 hours

Pick one public-facing workflow where trust and authorship matter.

Add three provenance labels: human-made, AI-assisted, AI-generated.

Create a short disclosure template for contributors.

Add a human review step before publication or delivery.

Run 10 real assets through the workflow and check whether the labels are clear.

Refine the rules before expanding to other content or client workflows.

6) Metrics

Percentage of assets with provenance labels

Number of AI-assisted outputs reviewed by humans

Disclosure completion rate

Unsupported-claim rate

Public-facing correction rate

Time added by provenance review

Trust score from clients, users, or reviewers
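Several of these metrics fall straight out of the provenance register. The following sketch computes three of them from a list of asset dictionaries; the key names are assumptions, and treating the disclosure completion rate as "AI-assisted or AI-generated assets with a non-empty disclosure" is one possible definition, not the only one.

```python
from typing import Dict, List


def provenance_metrics(assets: List[Dict]) -> Dict[str, float]:
    """Compute label coverage, reviewed AI-assisted count, and disclosure completion."""
    total = len(assets)
    labeled = sum(1 for a in assets if a.get("label"))
    ai_touched = [a for a in assets
                  if a.get("label") in ("AI-assisted", "AI-generated")]
    reviewed = sum(1 for a in assets
                   if a.get("label") == "AI-assisted" and a.get("human_reviewed"))
    disclosed = sum(1 for a in ai_touched if a.get("ai_disclosure"))
    return {
        "label_coverage_pct": 100 * labeled / total if total else 0.0,
        "ai_assisted_reviewed": reviewed,
        "disclosure_completion_pct":
            100 * disclosed / len(ai_touched) if ai_touched else 0.0,
    }


pilot_assets = [
    {"label": "human-made"},
    {"label": "AI-assisted", "human_reviewed": True, "ai_disclosure": "AI drafted the intro"},
    {"label": "AI-generated", "human_reviewed": False, "ai_disclosure": ""},
    {"label": None},  # intake record that never got a provenance tag
]
print(provenance_metrics(pilot_assets))
# {'label_coverage_pct': 75.0, 'ai_assisted_reviewed': 1, 'disclosure_completion_pct': 50.0}
```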

Pro Tip: The future is not “AI or human.” The future is knowing exactly where the machine helped and where the human still owns the call.

🎯 The Arsenal — Tools & Platforms

Airtable · simple provenance register for assets, labels, owners, and review status

Google Sheets · lightweight tracking for AI usage, disclosure, and approval metrics

ChatGPT / Claude · useful for drafting, classification, and review, as long as final authorship stays clear

CMS metadata fields · practical place to store human-made, AI-assisted, and AI-generated labels

Human review queues · boring, necessary, and suddenly very relevant when synthetic work starts competing for human trust

Copy-paste prompt block:

You are helping me build a Human Provenance Engine.

For the workflow below:

1. identify whether the output is human-made, AI-assisted, or AI-generated

2. identify where human judgment is required

3. identify what AI contributed

4. identify whether disclosure is required

5. identify what evidence or prompt history should be retained

6. design a review process

7. define the key metrics to track

Workflow:

[insert workflow here]

Return the answer in markdown with sections for:

- Workflow summary

- Provenance classification

- Human contribution

- AI contribution

- Disclosure requirement

- Review process

- Metrics

💡 Free Office Hours

If your organisation is using AI but has no clean way to explain authorship, ownership, or trust, I run free office hours to help map the workflow and build a provenance layer that actually holds up.

Book here: https://calendly.com

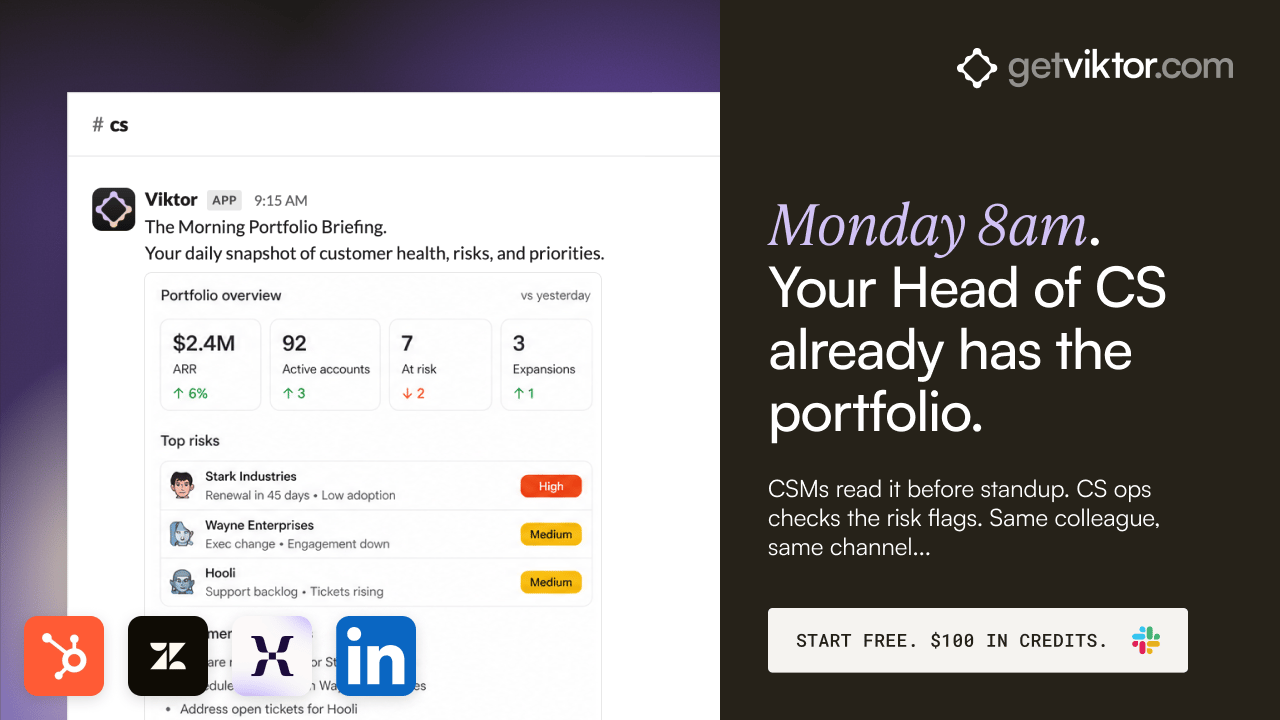

Treat Every Account like your Top 10

Every CS team has a top tier. The strategic accounts that get briefed QBRs, fast escalations, executive sponsors checking in mid-quarter. The other 190 get a generic quarterly email and a renewal scramble in week 11.

What if your top-tier playbook ran across your entire book? Every QBR briefed. Every renewal flagged 60 days out. Every usage drop surfaced before the CSM notices. Every sponsor change flagged the day it happens on LinkedIn.

That's what your CS team gets when there's a colleague in Slack reading the portfolio every morning, drafting every QBR brief, and watching the health signals around the clock. Your CSMs talk to customers. The prep work runs in the background.

11,000+ teams use Viktor daily. SOC 2 certified. Your data never trains models.

"Viktor is now an integral team member." Patrick O'Doherty, Yarra Web

🕹️ Game Over

AI can help make the thing. That does not mean it gets the trophy.

— Aaron Automating the boring. Amplifying the brilliant.
